Feb 2022

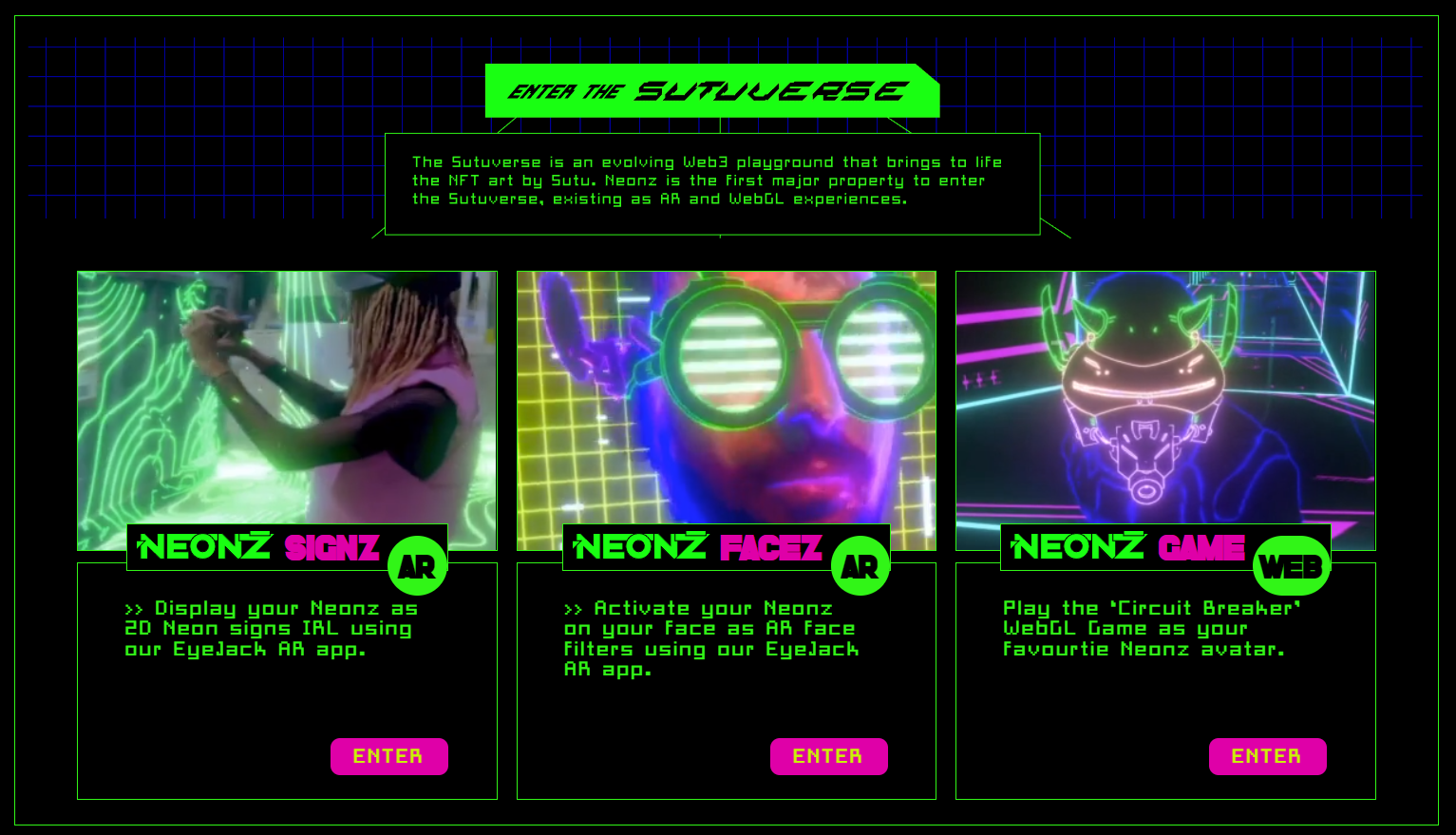

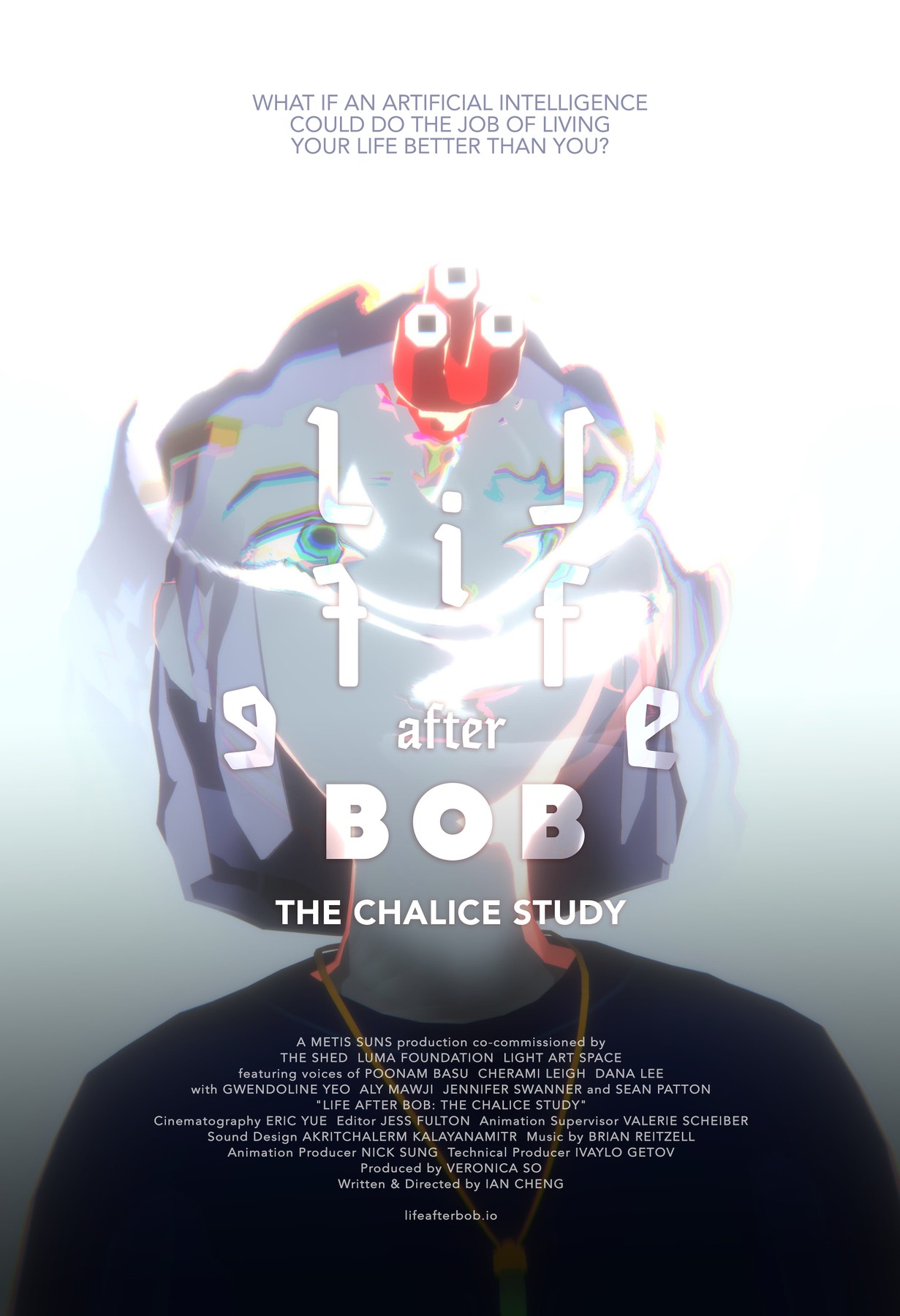

AR NFT Viewer

In 2021, Sutu launched one of the first generative NFT series on the Tezos blockchain, a series of 10,000 profile pics called Neonz. It was developed by the folks at Eyejack, and I got on board to help them with some cool AR rendering effects.

I worked on a couple of components of the SUTUVERSE:

- Face filter - https://www.neonz.xyz/neonz-facez

- NFT ART Viewer - https://www.neonz.xyz/neonz-signz

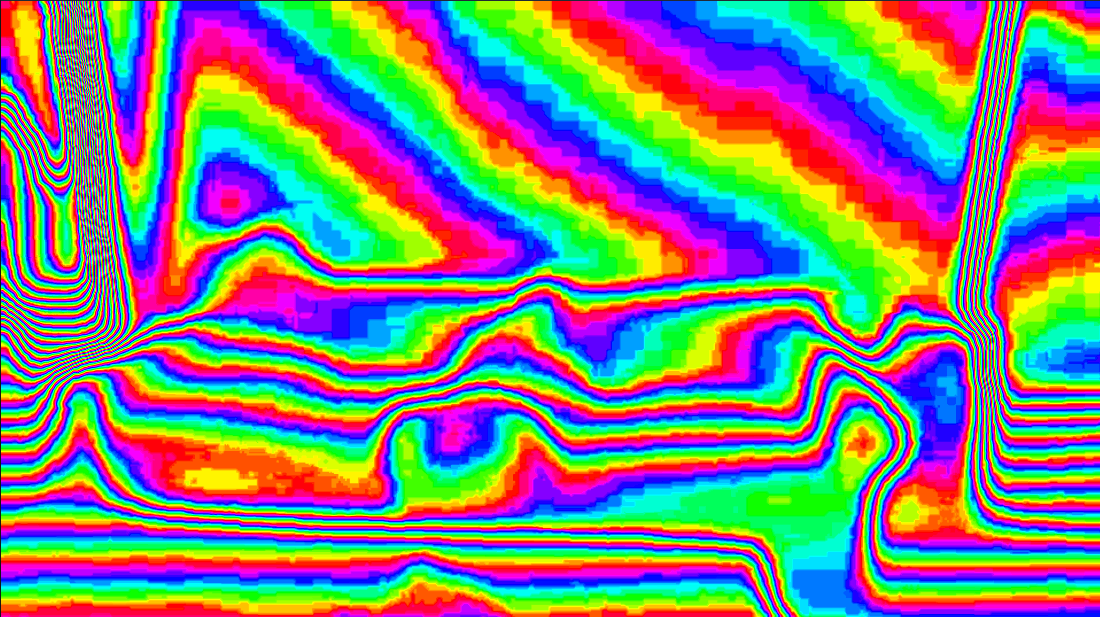

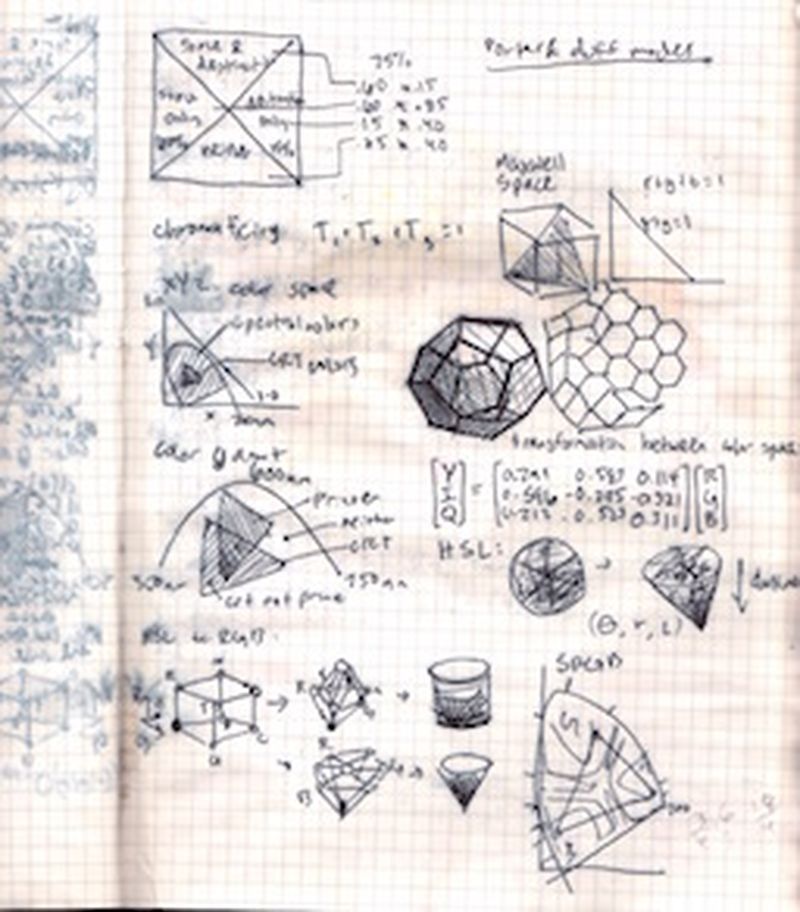

AR Lighting

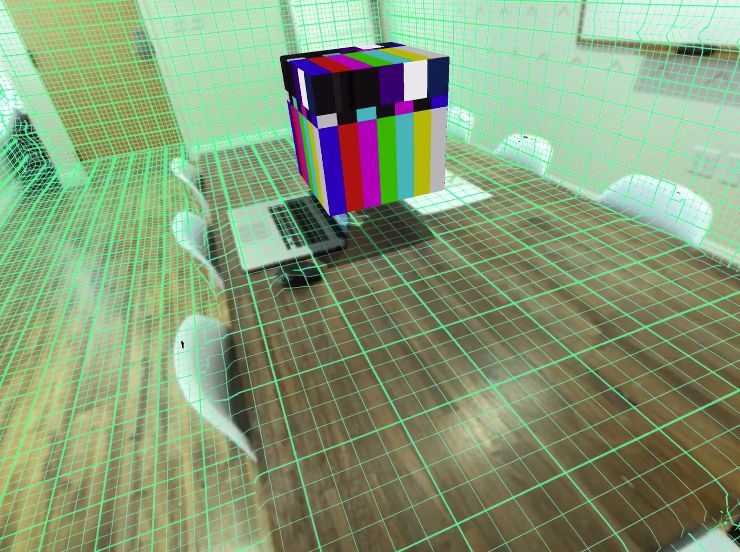

The new ARKit stuff has some awesome features for spatial perception: you can use either a realtime 3D scanned mesh or a depth image to composite new types of lighting into a scene. On Android, you can get an estimated depth map and compute lighting that way as well:

Color and Depth

The depth is not great quality, I think because it is computed at a very low resolution and then just interpolated. It is somehow both too smooth and too blocky at the same time, which is impressive. I think this was set to the “Fastest” quality, so I’m not expecting anything great. (The Android results are even worse.)

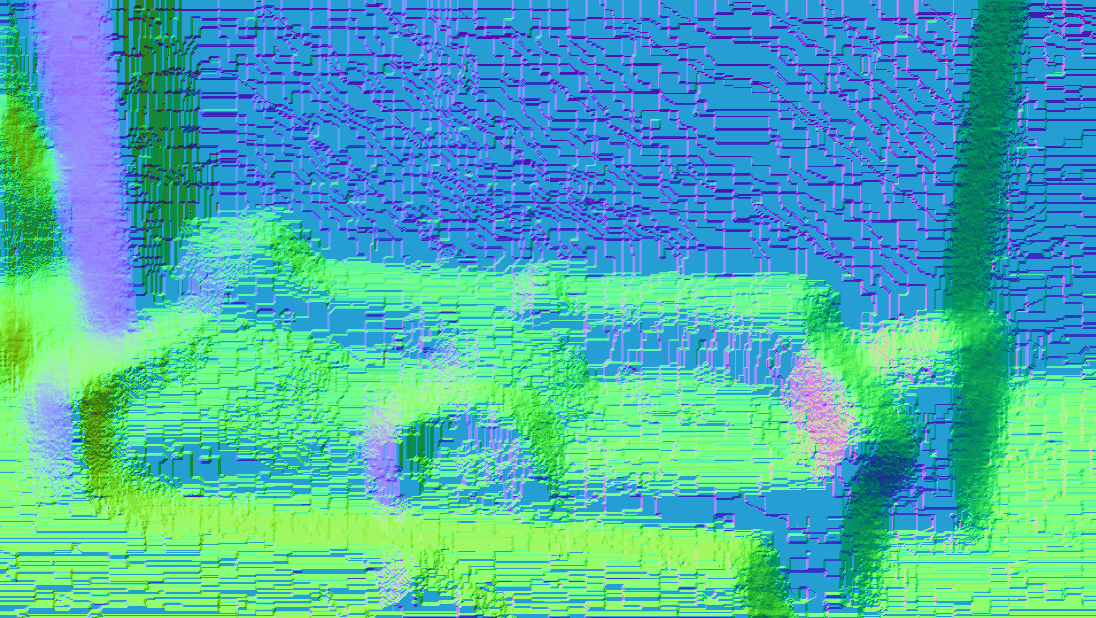

Normals

Using the depth image, you can estimate normals. There are a lot of blocky artifacts because of the way the depth image is computed, so I do some smoothing.
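As a rough illustration of the idea (not the actual shader used in the project), here is a minimal CPU-side sketch in Python. It back-projects each depth pixel into camera space with a pinhole model and takes the cross product of the horizontal and vertical position differences. The intrinsics `fx`, `fy`, `cx`, `cy` and the function names are assumptions made for this example, and the axis convention (x right, y down, z forward) is assumed too.

```python
import math

def unproject(u, v, depth, fx, fy, cx, cy):
    """Back-project pixel (u, v) with metric depth into camera space (pinhole model)."""
    return ((u - cx) * depth / fx, (v - cy) * depth / fy, depth)

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(v):
    length = math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)
    if length == 0.0:
        return (0.0, 0.0, 0.0)
    return (v[0] / length, v[1] / length, v[2] / length)

def estimate_normals(depth, fx, fy, cx, cy):
    """Per-pixel normals from a depth image (a list of rows), via central differences."""
    h, w = len(depth), len(depth[0])
    normals = [[(0.0, 0.0, 0.0)] * w for _ in range(h)]
    for v in range(h):
        for u in range(w):
            # Clamp neighbour indices at the image border.
            u0, u1 = max(u - 1, 0), min(u + 1, w - 1)
            v0, v1 = max(v - 1, 0), min(v + 1, h - 1)
            dx = sub(unproject(u1, v, depth[v][u1], fx, fy, cx, cy),
                     unproject(u0, v, depth[v][u0], fx, fy, cx, cy))
            dy = sub(unproject(u, v1, depth[v1][u], fx, fy, cx, cy),
                     unproject(u, v0, depth[v0][u], fx, fy, cx, cy))
            # Cross product order chosen so normals face the camera (negative z).
            normals[v][u] = normalize(cross(dy, dx))
    return normals
```

Because the differences are taken between neighbouring pixels, any blockiness in the depth image shows up directly in the normals, which is why some smoothing of the depth or of the normals helps.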

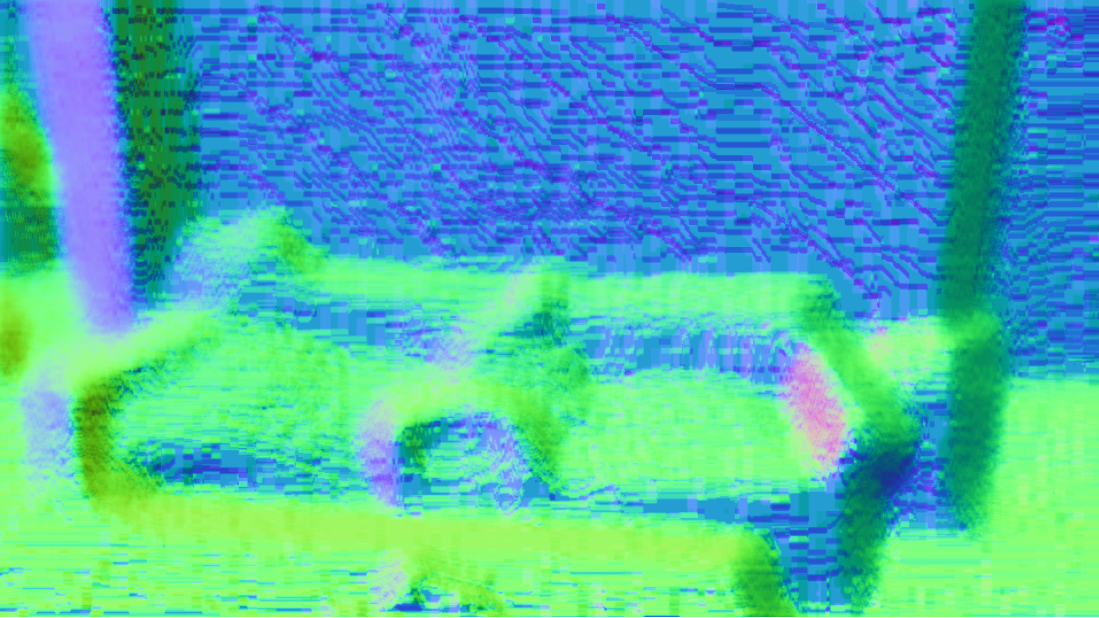

Basic Lights

Directional Light, Point Light

You can then do most of the lighting calculations you would do in any real-time renderer, using the estimated surface normal and the world position.
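To make that concrete, here is a small Python sketch of the two basic lights using plain Lambert (N·L) shading. It is illustrative only: the function names, the `light_range` parameter, and the particular falloff curve are assumptions for this example, not the project's shader code.

```python
import math

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def normalize(v):
    length = math.sqrt(dot(v, v))
    if length == 0.0:
        return (0.0, 0.0, 0.0)
    return (v[0] / length, v[1] / length, v[2] / length)

def directional_light(normal, light_dir, color, intensity=1.0):
    """Lambert term for a directional light.

    light_dir points from the light toward the scene, so it is negated
    to get the surface-to-light direction.
    """
    to_light = normalize((-light_dir[0], -light_dir[1], -light_dir[2]))
    n_dot_l = max(dot(normalize(normal), to_light), 0.0)
    return tuple(c * intensity * n_dot_l for c in color)

def point_light(position, normal, light_pos, color, intensity=1.0, light_range=5.0):
    """Lambert term for a point light with distance attenuation.

    The falloff (inverse-square style, windowed to reach zero at
    light_range) is an assumed curve chosen for this sketch.
    """
    to_light = sub(light_pos, position)
    dist = math.sqrt(dot(to_light, to_light))
    if dist == 0.0 or dist >= light_range:
        return (0.0, 0.0, 0.0)
    direction = (to_light[0] / dist, to_light[1] / dist, to_light[2] / dist)
    n_dot_l = max(dot(normalize(normal), direction), 0.0)
    window = (1.0 - (dist / light_range) ** 2) ** 2
    attenuation = window / (1.0 + dist * dist)
    return tuple(c * intensity * n_dot_l * attenuation for c in color)
```

In a shader, `position` would be the world position reconstructed from depth and `normal` the estimated normal for the same pixel.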

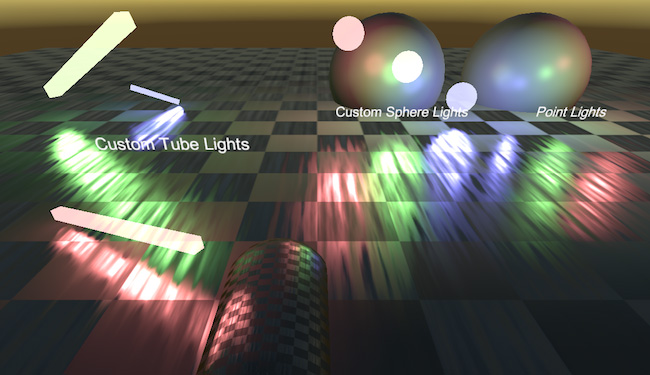

Analytic lights

Then you can compute any type of light and just add it onto the scene.

I based this example on some Unity rendering command buffer samples, such as:

https://docs.unity3d.com/2018.3/Documentation/Manual/GraphicsCommandBuffers.html
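As one possible illustration of "compute a light and add it on", here is a Python sketch of a neon-tube style light. It uses a common approximation: find the closest point on the tube's line segment and shade it like a point light, then add the result to the camera colour. The tube shape, the function names, and the falloff are all assumptions for this example and are not taken from the project or from the Unity samples.

```python
import math

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def closest_point_on_segment(p, a, b):
    """Closest point to p on the segment from a to b."""
    ab = sub(b, a)
    denom = dot(ab, ab)
    # Parameter t along the segment, clamped to [0, 1].
    t = 0.0 if denom == 0.0 else max(0.0, min(1.0, dot(sub(p, a), ab) / denom))
    return (a[0] + ab[0] * t, a[1] + ab[1] * t, a[2] + ab[2] * t)

def tube_light(position, normal, a, b, color, intensity=1.0):
    """Approximate a tube light by shading from its closest point.

    normal is assumed to be unit length.
    """
    closest = closest_point_on_segment(position, a, b)
    to_light = sub(closest, position)
    dist = math.sqrt(dot(to_light, to_light))
    if dist == 0.0:
        return (0.0, 0.0, 0.0)
    n_dot_l = max(dot(normal, to_light) / dist, 0.0)
    attenuation = intensity / (1.0 + dist * dist)  # assumed falloff
    return tuple(c * n_dot_l * attenuation for c in color)

def add_light(camera_rgb, light_rgb):
    """Additively composite the light onto the camera pixel, clamped to 1."""
    return tuple(min(c + l, 1.0) for c, l in zip(camera_rgb, light_rgb))
```

For example, `add_light(pixel, tube_light(world_pos, normal, a, b, (1.0, 0.0, 1.0)))` would tint a camera pixel with a magenta tube running from `a` to `b`.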

iOS compatibility

I ran into a very strange issue where I could not get the buffer uploaded to the

Environment effects

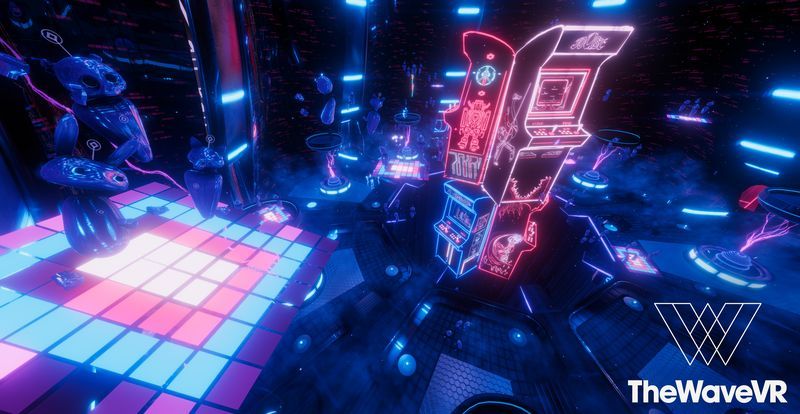

I also created some fun effects for the experience which will add MORE NEON into the world -

And wouldn’t you know these are also released as some

AR Face Filter

FaceTracking

Exhibitions

Exhibited at SXSW and various art galleries (2022).

Tools

Unity, GLSL/HLSL, ARKit, ARCore, WebXR, iOS, Android